Why Your RAG Implementation Will Fail Without a Data Readiness Audit First

The uncomfortable truth every enterprise AI team needs to hear: your RAG system's output quality is permanently capped by your data quality. No matter how powerful your LLM, how sophisticated your retrieval architecture, or how elegant your prompts — if the underlying data isn't ready, your RAG deployment will stall, hallucinate, or quietly deliver wrong answers in production.

Every month, another enterprise data team tells us some version of the same story. They spent six months selecting an LLM. Another three months building a RAG pipeline on top of Salesforce, SharePoint, and their document management system. They showed the demo at the executive all-hands, and it looked impressive. Then they deployed it to real users, and within two weeks the support tickets started coming in.

"It gave me last year's pricing." "It cited a policy we retired in 2024." "It hallucinated a feature we don't even have."

None of those problems were the model's fault. None of them were prompting errors. Every single one traced back to the same root cause: the data was never audited for AI readiness before the build began.

These stories aren't anomalies. They describe the default outcome when enterprises deploy AI on unprepared data. And the painful irony is that most of the damage is entirely avoidable, but only if you catch it before you build, not after.

The Core Problem: RAG Amplifies Your Data's Weaknesses, Not Just Its Strengths

RAG (Retrieval-Augmented Generation) is a fundamentally data-dependent architecture. Where a traditional application can paper over messy data with business logic and validation layers, a RAG system exposes your data's flaws directly to a language model — and the model faithfully reflects what it retrieves.

Think of it this way: RAG is essentially a very sophisticated search engine paired with a very articulate writer. The writer can only communicate what the search engine finds. If the search engine retrieves the wrong version of a document, the writer will explain the wrong version confidently. If the search engine can't find a document because the metadata is broken, the writer will tell your user that no such information exists — even when it clearly does.

The hard ceiling: A 2025 medical study found that when a RAG chatbot was restricted to high-quality, curated content, hallucinations dropped to near zero. When the same model was given unvetted baseline data, it fabricated responses for 52% of questions that fell outside its clean reference set. In practice, your data quality sets your hallucination rate.

And yet, most enterprises assess their readiness for RAG the same way they assess readiness for any software project: Does the data exist? Can we access it? Is there an API? These questions are necessary but nowhere near sufficient. What actually determines whether a RAG deployment succeeds is a different, deeper set of criteria — and the only way to know where you stand is a structured data readiness audit.

The 7 Data Readiness Signals That Determine Your RAG's Fate

Through working with enterprise clients across healthcare, finance, education, and manufacturing, DeepRoot's data science team has identified the seven most critical readiness signals that predict RAG performance before a single line of pipeline code is written.


01

Document Version Integrity

Does every data source have a single, authoritative version of truth? In most enterprises, the same document exists in 3–5 versions spread across SharePoint, email archives, and local drives. When a RAG system ingests all of them, it retrieves whichever version is semantically closest to the query — not the most current. A safety manual from 2022 and a safety manual from 2025 can coexist in your vector store, and your RAG will pick between them arbitrarily. Version governance isn't a nice-to-have for RAG — it's a hard prerequisite.

Critical Failure Risk
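To make the version problem concrete, here is a minimal Python sketch of a pre-ingestion deduplication pass. It assumes every copy of a document already carries a shared identifier (`doc_id`) and a trustworthy last-modified timestamp; in practice, establishing that shared identity across SharePoint, email archives and local drives is the hard part of the audit, and timestamps copied between systems can be wrong. `DocVersion`, `latest_versions` and `version_conflicts` are hypothetical names, not part of any product.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class DocVersion:
    doc_id: str         # hypothetical identifier shared by every copy of the same document
    source: str         # where this copy was found, e.g. "sharepoint" or "local_drive"
    modified: datetime  # last-modified timestamp from the source system's metadata
    text: str


def latest_versions(docs: list[DocVersion]) -> list[DocVersion]:
    """Keep only the most recently modified copy of each document ID."""
    newest: dict[str, DocVersion] = {}
    for doc in docs:
        current = newest.get(doc.doc_id)
        if current is None or doc.modified > current.modified:
            newest[doc.doc_id] = doc
    return list(newest.values())


def version_conflicts(docs: list[DocVersion]) -> list[str]:
    """List document IDs that exist in more than one copy, for owner review."""
    counts = Counter(doc.doc_id for doc in docs)
    return sorted(doc_id for doc_id, n in counts.items() if n > 1)


docs = [
    DocVersion("safety-manual", "sharepoint", datetime(2025, 3, 1), "2025 edition"),
    DocVersion("safety-manual", "local_drive", datetime(2022, 6, 1), "2022 edition"),
    DocVersion("travel-policy", "sharepoint", datetime(2024, 1, 15), "current policy"),
]
print([d.text for d in latest_versions(docs)])  # ['2025 edition', 'current policy']
print(version_conflicts(docs))                  # ['safety-manual']
```

Keeping the newest copy is a blunt rule. The conflict list exists so that a document owner confirms which version is authoritative, rather than letting a timestamp decide silently.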

02

Metadata Richness and Consistency

RAG retrieval quality depends heavily on the quality of your metadata. When documents lack consistent date fields, department tags, content-type labels, and update timestamps, the vector store has no reliable way to prioritise recency or relevance. A query for "current procurement policy" returns results ranked by semantic similarity alone, and the most semantically similar document is often not the most current one. Metadata auditing is unglamorous work with an outsized impact on retrieval precision.

Critical Failure Risk
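One way metadata feeds retrieval is recency-aware re-ranking: blend the vector similarity score with a decay on each document's update timestamp. The sketch below illustrates that general technique under stated assumptions; it does not describe any particular vector store. The blend weight `alpha` and the 180-day half-life are arbitrary choices, and documents with no timestamp receive zero recency credit, which is exactly why missing metadata hurts.

```python
from datetime import datetime


def recency_weight(updated: datetime, now: datetime, half_life_days: float = 180.0) -> float:
    """Exponential decay: a document exactly half_life_days old scores 0.5."""
    age_days = max((now - updated).total_seconds() / 86400, 0.0)
    return 0.5 ** (age_days / half_life_days)


def rerank(hits, now, alpha=0.7, half_life_days=180.0):
    """hits: (doc_id, similarity, updated_at or None). Returns doc IDs, best first."""
    scored = []
    for doc_id, similarity, updated in hits:
        # No timestamp means no recency credit: the retriever cannot tell old from new.
        recency = recency_weight(updated, now, half_life_days) if updated else 0.0
        scored.append((alpha * similarity + (1 - alpha) * recency, doc_id))
    return [doc_id for _, doc_id in sorted(scored, reverse=True)]


now = datetime(2025, 6, 1)
hits = [
    ("procurement-policy-2021", 0.91, datetime(2021, 5, 1)),
    ("procurement-policy-2025", 0.86, datetime(2025, 4, 1)),
    ("procurement-policy-undated", 0.89, None),
]
print(rerank(hits, now))
# ['procurement-policy-2025', 'procurement-policy-2021', 'procurement-policy-undated']
```

Ranked by similarity alone, the 2021 policy would come first and the current one last; the timestamp is what flips the order.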

03

Semantic Alignment with User Query Language

Your internal documents use your organisation's internal vocabulary. Your users ask questions in natural, conversational language — or the language of their role, their industry, their level of expertise. When these vocabularies don't overlap, retrieval fails silently: the relevant document exists, but the embedding similarity between the query and the document is too low for it to surface. Semantic alignment assessment means mapping the language patterns in your data against the expected query patterns of your user population — and identifying the gaps before deployment.

High Impact
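A cheap first pass at this assessment is a lexical gap report: collect sample user questions (from a pilot, help-desk tickets or interviews) and list the query terms that never appear anywhere in the corpus. This is a deliberately crude proxy, assumed here for illustration; embedding models bridge some synonyms on their own, so treat the output as a review list for a glossary or query-expansion layer, not a verdict. The function names and sample texts are hypothetical.

```python
import re
from collections import Counter

# Small illustrative stopword list; a real audit would use a fuller one.
STOPWORDS = {"a", "an", "the", "how", "do", "i", "my", "for", "to", "of", "who", "what", "can"}


def tokens(text: str) -> list[str]:
    return [t for t in re.findall(r"[a-z0-9]+", text.lower()) if t not in STOPWORDS]


def vocabulary_gaps(queries, documents, min_count=1):
    """Query terms absent from every document, most frequent first."""
    corpus_vocab = set()
    for doc in documents:
        corpus_vocab.update(tokens(doc))
    missing = Counter(t for q in queries for t in tokens(q) if t not in corpus_vocab)
    return [(term, n) for term, n in missing.most_common() if n >= min_count]


documents = [
    "Travel and expense reimbursement policy for employees.",
    "Procurement policy for vendor onboarding.",
]
queries = [
    "How do I get paid back for a work trip?",
    "Who pays for my work trip?",
    "How do I add a new supplier?",
]
print(vocabulary_gaps(queries, documents, min_count=2))  # [('work', 2), ('trip', 2)]
```

Users say "trip" and "supplier"; the documents say "travel" and "vendor". Pairs like these are candidates for a synonym map before deployment.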

04

Access Control Metadata Preservation

Enterprise documents carry permissions — who can see what is governed by IAM, RBAC, and document-level classification. When documents are ingested into a vector store, permissions metadata is frequently stripped in the process. This is not just a security concern — it is a compliance catastrophe. HR records become retrievable by anyone who asks the right question. Executive compensation documents surface in responses to junior employee queries. A rigorous readiness audit includes an access control mapping exercise to verify that document-level permissions survive the ingestion pipeline intact.

Critical Failure Risk
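The audit question is whether permissions survive ingestion; the runtime question is whether retrieval honours them. Here is a minimal sketch of both, assuming each chunk stores the set of groups copied from its source document's ACL. `allowed_groups` is a hypothetical field name, not a specific vector store's schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    chunk_id: str
    text: str
    # Groups copied from the source document's permissions at ingestion time.
    allowed_groups: frozenset = frozenset()


def chunks_missing_acl(chunks):
    """Audit check: chunks whose permission metadata was lost during ingestion."""
    return [c.chunk_id for c in chunks if not c.allowed_groups]


def authorised(results, user_groups):
    """Deny by default: a chunk is returned only if it shares a group with the user."""
    return [c for c in results if c.allowed_groups & user_groups]


chunks = [
    Chunk("hr-001", "Salary bands by grade", frozenset({"hr"})),
    Chunk("kb-042", "VPN setup guide", frozenset({"all-staff"})),
    Chunk("exec-007", "Executive compensation memo"),  # ACL stripped upstream
]
print(chunks_missing_acl(chunks))                               # ['exec-007']
print([c.chunk_id for c in authorised(chunks, {"all-staff"})])  # ['kb-042']
```

Dropping chunks with no ACL is the safe default. Where the vector store supports metadata filters, pushing the group filter into the query itself also keeps restricted chunks from using up the top-k budget.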

05

Cross-System Schema Coherence

Most enterprise RAG systems draw from multiple sources: a CRM, a document management platform, a data warehouse, email archives, structured databases. Each source has its own schema, its own data types, its own field naming conventions. When a RAG system tries to reason across these sources — answering a question that requires pulling context from Salesforce and SharePoint simultaneously — schema incoherence causes retrieval confusion and corrupted synthesis. Schema mapping and normalisation across sources is a readiness dimension that many organisations don't discover they've skipped until they're debugging production.

High Impact
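Schema mapping usually starts with a canonical record shape and one explicit field map per source. The sketch below shows the idea; the Salesforce- and SharePoint-style field names in `FIELD_MAPS` are illustrative assumptions, so check them against your own exports before reusing anything like this.

```python
from datetime import datetime

# Illustrative mappings from source field names to one canonical schema.
FIELD_MAPS = {
    "salesforce": {"Id": "record_id", "LastModifiedDate": "updated_at", "OwnerId": "owner"},
    "sharepoint": {"UniqueId": "record_id", "Modified": "updated_at", "Author": "owner"},
}
CANONICAL_FIELDS = ("record_id", "updated_at", "owner")


def normalise(source, record):
    """Rename fields to the canonical schema and report any that are missing."""
    out = {canonical: record.get(src) for src, canonical in FIELD_MAPS[source].items()}
    # Parse ISO 8601 strings so timestamps from different systems compare correctly.
    if isinstance(out["updated_at"], str):
        out["updated_at"] = datetime.fromisoformat(out["updated_at"])
    out["source"] = source
    missing = [f for f in CANONICAL_FIELDS if out.get(f) is None]
    return out, missing


sf, sf_missing = normalise("salesforce", {
    "Id": "sf-opp-001", "LastModifiedDate": "2025-03-04T10:15:00+00:00", "OwnerId": "sales-ops"})
sp, sp_missing = normalise("sharepoint", {
    "UniqueId": "sp-item-17", "Modified": "2025-02-01T09:00:00+00:00"})
print(sf_missing, sp_missing)               # [] ['owner']
print(sf["updated_at"] > sp["updated_at"])  # True
```

The missing-field report is as useful as the mapping: a source that cannot supply an owner or timestamp is a gap the audit should record, not one the pipeline should paper over.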

06

Data Freshness and Update Frequency Alignment

RAG systems retrieve from a knowledge base that was ingested at a point in time. If your underlying data changes faster than your re-ingestion cadence, your RAG system will serve stale answers — confidently and without any indication that the information is outdated. This is particularly dangerous in regulated industries where policies, compliance requirements, and product specifications change frequently. A readiness audit must map the update velocity of every data source against your intended re-ingestion schedule and flag the high-velocity sources that require real-time or near-real-time pipeline handling.

Deployment Risk
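A simple way to map update velocity against re-ingestion cadence is to estimate, per source, how many changes can land between two ingestion runs. This sketch assumes you can measure each source's typical update interval (for example, from modification timestamps); the source names, intervals and the `freshness_risks` helper are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SourceCadence:
    name: str
    typical_update_hours: float  # typical interval between changes in the source
    reingest_hours: float        # planned interval between re-ingestion runs


def freshness_risks(sources, tolerance=1.0):
    """Flag sources that change more often than they are re-ingested.

    ratio = reingest interval / update interval, i.e. roughly how many source
    changes can accumulate between two ingestion runs.
    """
    flagged = [
        (s.name, round(s.reingest_hours / s.typical_update_hours, 1))
        for s in sources
        if s.reingest_hours / s.typical_update_hours > tolerance
    ]
    return sorted(flagged, key=lambda item: item[1], reverse=True)


sources = [
    SourceCadence("pricing_db", typical_update_hours=6, reingest_hours=168),
    SourceCadence("policy_library", typical_update_hours=720, reingest_hours=168),
    SourceCadence("product_specs", typical_update_hours=48, reingest_hours=168),
]
print(freshness_risks(sources))  # [('pricing_db', 28.0), ('product_specs', 3.5)]
```

A ratio of 28 means the pricing source changes about 28 times between weekly loads, which is how a system ends up quoting last year's pricing. Sources like that are the candidates for event-driven or near-real-time ingestion.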

07

Governance and Compliance Lineage

Increasingly, regulated industries must be able to explain every AI-generated output — who retrieved what, from which source, under which authorisation, at which timestamp. This requires your data to carry provenance metadata from the moment of creation through every transformation in the RAG pipeline. Without governance lineage built into your data architecture from the start, you cannot produce audit trails, you cannot comply with emerging AI regulations, and you cannot diagnose retrieval failures systematically. Governance readiness is the foundation that determines whether your RAG deployment can scale safely beyond a pilot.

Deployment Risk
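Lineage is easiest to build in at the retrieval step: emit one structured record per retrieval carrying the fields listed above (who, what, which source, which authorisation, when). This is a minimal sketch with hypothetical field names and a made-up `sharepoint://` URI scheme; a production system would write these records to an append-only store rather than return a string.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class RetrievalEvent:
    user_id: str
    query: str
    chunk_ids: tuple
    source_uris: tuple
    authorisation: str  # the group or role that granted access to these chunks
    retrieved_at: str   # ISO 8601 timestamp in UTC


def log_retrieval(user_id, query, chunks, authorisation, now=None):
    """Build one JSON lineage record for a single retrieval call."""
    now = now or datetime.now(timezone.utc)
    event = RetrievalEvent(
        user_id=user_id,
        query=query,
        chunk_ids=tuple(c["chunk_id"] for c in chunks),
        source_uris=tuple(c["source_uri"] for c in chunks),
        authorisation=authorisation,
        retrieved_at=now.isoformat(),
    )
    return json.dumps(asdict(event), sort_keys=True)


record = log_retrieval(
    user_id="u-481",
    query="current travel policy",
    chunks=[{"chunk_id": "pol-12#3", "source_uri": "sharepoint://policies/travel.docx"}],
    authorisation="group:all-staff",
    now=datetime(2025, 6, 1, 9, 30, tzinfo=timezone.utc),
)
print(record)
```

Because each record names the exact chunks and sources behind an answer, the same log supports compliance audit trails and the systematic diagnosis of retrieval failures.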

Is your data ready across these 7 dimensions?

DeepRoot's DRI audit scores your enterprise data across all seven signals within 2 weeks, with no data movement required.

What Happens When You Skip the Audit: Real-World Cost Scenarios

The consequences of deploying RAG on unprepared data aren't just technical. They have quantifiable business costs that tend to surface at the worst possible moments.

The mid-project discovery penalty. Gartner analysis indicates that discovering data infrastructure problems mid-implementation adds 3–6 months to deployment timelines. For a medium-to-large deployment, at enterprise day rates for AI engineering talent, that translates to $100,000–$380,000 in unplanned remediation costs. The same remediation work costs a fraction of that when it is scoped and planned before the build begins.

The silent degradation problem. A RAG system that works on 10,000 documents in staging will not automatically maintain quality when it scales to 10 million documents in production. At scale, retrieval precision silently degrades. The system remains fast — it just becomes increasingly wrong. Without the baseline data quality measurements that a readiness audit provides, there's no way to detect this degradation or attribute it to its root cause.
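Detecting silent degradation needs a baseline. One common approach is a small labelled evaluation set (questions paired with the documents that should answer them), scored with precision@k at staging and re-scored periodically in production. The sketch below assumes such a set exists; the query IDs, document IDs and the 0.05 tolerance are hypothetical.

```python
def precision_at_k(retrieved, relevant, k):
    """Fraction of the top-k retrieved document IDs that are relevant."""
    return sum(1 for doc_id in retrieved[:k] if doc_id in relevant) / k


def mean_precision_at_k(run, gold, k=3):
    """run: query -> ranked doc IDs; gold: query -> set of relevant doc IDs."""
    scores = [precision_at_k(run.get(q, []), relevant, k) for q, relevant in gold.items()]
    return sum(scores) / len(scores)


def degraded(baseline, current, max_drop=0.05):
    """True when precision has fallen by more than the tolerated amount."""
    return baseline - current > max_drop


gold = {"q1": {"d1", "d2"}, "q2": {"d7"}}
staging = {"q1": ["d1", "d2", "d9"], "q2": ["d7", "d3", "d4"]}
production = {"q1": ["d1", "d5", "d6"], "q2": ["d8", "d3", "d4"]}

baseline = mean_precision_at_k(staging, gold)
current = mean_precision_at_k(production, gold)
print(f"{baseline:.2f} -> {current:.2f}, degraded: {degraded(baseline, current)}")
# 0.50 -> 0.17, degraded: True
```

The system in this example still returns three results per query, quickly; only the score against the labelled set reveals that they have become mostly wrong.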

"Organizations that skip a formal infrastructure audit routinely overspend the rest of their implementation by 40–60%."

Deloitte Enterprise AI Implementation Survey, 2026

The compliance exposure scenario. In healthcare, finance, and legal contexts, a RAG system serving stale or unauthorised data is not just a technical failure; it is a compliance failure. The reputational and regulatory costs of a single high-profile incident dwarf the cost of any readiness preparation investment. When the EU AI Act and other emerging frameworks mandate explainability and audit trails, organisations without data governance lineage will find themselves unable to comply retroactively.

Comparing Your Options: DIY Audit vs. Structured DRI Assessment

When organisations recognise they need a data readiness process, they typically consider three approaches. Here's how they compare on the dimensions that matter for RAG deployment:

| Approach | Time Required | Coverage | Quantified Score | Actionable Priorities | No Data Movement |
|---|---|---|---|---|---|
| Manual checklist (internal team) | 6–12 weeks | Partial, depends on team | ✗ | ⚠ Qualitative only | ✓ |
| Data engineering consultancy audit | 8–16 weeks | Comprehensive | ⚠ Varies | ✓ | ✗ Data often shared |
| DeepRoot DRI Assessment | 2 weeks | 7-dimension automated + human | ✓ Scored 0–100 | ✓ Prioritised roadmap | ✓ Metadata only |

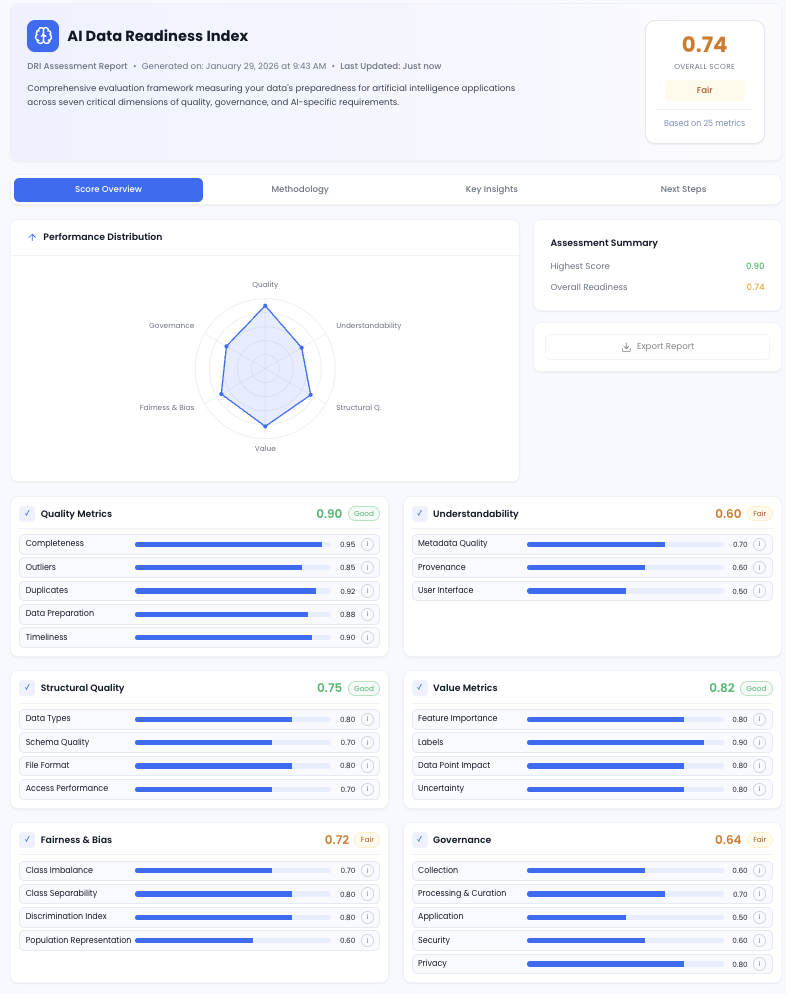

The DeepRoot DRI (Data Readiness Index) is a multi-dimensional scoring engine that was built specifically for this problem. It ingests metadata from your enterprise systems — Salesforce, SharePoint, Oracle, Snowflake, Workday, MongoDB, and more — without moving a single byte of your actual data. It applies automated assessment across seven readiness dimensions and produces a scored readiness report with diagnostic insights and a prioritised remediation roadmap.

The Data Readiness Index (DRI)

Your quantified, confidence-based view of how AI-ready your enterprise data actually is — before you commit to a RAG build.

No data movement · Results in 14 days · Prioritised action roadmap included

A Practical Readiness Framework: What to Do Before You Build

If you're planning a RAG deployment — or troubleshooting one that isn't performing — here's the sequence that consistently produces the fastest path from data chaos to production-quality AI.

Step 1: Map Every Data Source You Intend to Include

Before any technical work begins, document every system that will feed your RAG knowledge base. For each source, record: the system of record, the primary data owner, the update frequency, the approximate data volume, and the access control model. This sounds obvious. Almost no one does it before they start building. Almost everyone wishes they had.
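Even a lightweight, structured inventory makes gaps visible before the build starts. Here is a minimal sketch that records the five attributes listed above for each source and reports which ones nobody has filled in yet; `SourceEntry`, `inventory_gaps` and the sample values are hypothetical.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class SourceEntry:
    system_of_record: str
    data_owner: Optional[str] = None
    update_frequency: Optional[str] = None      # e.g. "hourly", "weekly"
    approx_volume: Optional[int] = None         # approximate number of documents or records
    access_control_model: Optional[str] = None  # e.g. "group-based", "record-level sharing"


def inventory_gaps(entries):
    """Map each incomplete source to the inventory fields still unknown."""
    gaps = {}
    for entry in entries:
        missing = [f.name for f in fields(entry) if getattr(entry, f.name) is None]
        if missing:
            gaps[entry.system_of_record] = missing
    return gaps


inventory = [
    SourceEntry("Salesforce", "Sales Ops", "hourly", 250_000, "record-level sharing"),
    SourceEntry("SharePoint", data_owner="IT", approx_volume=40_000),
]
print(inventory_gaps(inventory))
# {'SharePoint': ['update_frequency', 'access_control_model']}
```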

Step 2: Run an Automated Readiness Scan

Use an automated tool — DeepRoot's DRI or similar — to profile the data in each source system against the seven dimensions described above. You want quantified scores, not qualitative impressions. "The data quality seems okay" is not actionable. "Metadata completeness is 47% across your SharePoint tenant, with 68% of documents missing update timestamps" is actionable.
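To show what a quantified result looks like, here is a minimal completeness scan over document metadata. It is not DeepRoot's DRI; the required-field list and sample records are assumptions for illustration. It reports both the share of documents with every required field and the per-field missing rate, the same kind of figures quoted above.

```python
REQUIRED_FIELDS = ("title", "updated_at", "department", "content_type")


def completeness_report(docs, required=REQUIRED_FIELDS):
    """Percentage of fully complete documents, plus the missing rate for each field."""
    if not docs:
        raise ValueError("no documents to profile")
    total = len(docs)
    complete = sum(1 for d in docs if all(d.get(f) for f in required))
    return {
        "fully_complete_pct": round(100 * complete / total, 1),
        "missing_pct": {
            f: round(100 * sum(1 for d in docs if not d.get(f)) / total, 1) for f in required
        },
    }


docs = [
    {"title": "Travel policy", "updated_at": "2025-01-10", "department": "HR", "content_type": "policy"},
    {"title": "VPN guide", "department": "IT", "content_type": "how-to"},
    {"title": "Old memo", "content_type": "memo"},
    {"title": "Price list", "updated_at": "2025-05-02", "department": "Sales", "content_type": "pricing"},
]
print(completeness_report(docs))
# {'fully_complete_pct': 50.0, 'missing_pct': {'title': 0.0, 'updated_at': 50.0, 'department': 25.0, 'content_type': 0.0}}
```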

Step 3: Prioritise by Feasibility, Not by Ambition

DeepRoot's AI Compass tool was built for this step: taking your DRI scores and mapping them against potential RAG use cases to identify which are ready to deploy now versus which require remediation first. Enterprises consistently try to deploy the most ambitious use case first. The ones that succeed deploy the most ready use case first — build confidence, demonstrate value, then expand.

DeepRoot deployment principle: Start with the data sources that score highest on the DRI. Deploy your first RAG use case on clean, well-governed, semantically rich data. Demonstrate production value. Then use that credibility to fund the remediation work required to expand to lower-scoring data sources. This approach has a higher success rate than fixing everything first — which rarely happens before budgets run out.
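The "most ready first" rule can be sketched as a simple triage over per-source scores. This is not how AI Compass works internally (the article does not say); it assumes a use case is only as ready as its weakest source, and the 0–100 scores, the threshold of 70 and the use-case names are all hypothetical.

```python
from operator import itemgetter


def triage_use_cases(use_cases, source_scores, threshold=70):
    """Split use cases into deploy-now and remediate-first, best candidates first.

    A use case is treated as only as ready as its weakest source (an assumption).
    """
    ready, remediate = [], []
    for name, sources in use_cases.items():
        weakest = min(source_scores[s] for s in sources)
        if weakest >= threshold:
            ready.append((name, weakest))
        else:
            remediate.append((name, weakest))
    by_score = itemgetter(1)
    return sorted(ready, key=by_score, reverse=True), sorted(remediate, key=by_score, reverse=True)


scores = {"policy_library": 84, "salesforce": 76, "sharepoint_projects": 52, "email_archive": 31}
use_cases = {
    "HR policy assistant": ["policy_library"],
    "Account briefing bot": ["salesforce", "sharepoint_projects"],
    "Sales enablement Q&A": ["salesforce", "policy_library"],
    "Contract search": ["email_archive", "sharepoint_projects"],
}
ready, remediate = triage_use_cases(use_cases, scores)
print(ready)      # [('HR policy assistant', 84), ('Sales enablement Q&A', 76)]
print(remediate)  # [('Account briefing bot', 52), ('Contract search', 31)]
```

The weakest-link rule is conservative by design: an average would let one excellent source hide a poor one that the assistant still retrieves from.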

Step 4: Instrument Before You Deploy

Every enterprise RAG system needs monitoring from day one: retrieval precision metrics, hallucination rate tracking, user satisfaction signals, and source attribution logs. These aren't post-deployment improvements — they're the feedback loops that allow you to detect data quality degradation before users do. Building them in from the start requires that your data architecture was designed with observability in mind. A readiness audit identifies whether your current architecture supports this, and what needs to change if it doesn't.
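Source attribution logs make one cheap hallucination signal possible: check whether the sources an answer cites were actually retrieved. The sketch assumes the generation prompt asks the model to cite chunk IDs in square brackets (for example `[pol-12]`); that convention and the sample data are hypothetical. A valid citation does not prove the claim is supported, so treat these rates as early-warning indicators alongside human review.

```python
import re

# Assumes the prompt tells the model to cite chunk IDs in square brackets, e.g. [pol-12].
CITATION = re.compile(r"\[([\w#-]+)\]")


def unsupported_citations(answer, retrieved_ids):
    """Cited IDs that were not among the chunks actually retrieved for this answer."""
    return sorted(set(CITATION.findall(answer)) - set(retrieved_ids))


def attribution_metrics(responses):
    """Share of answers with no citation, and share citing something never retrieved."""
    total = len(responses)
    uncited = sum(1 for r in responses if not CITATION.search(r["answer"]))
    unsupported = sum(1 for r in responses if unsupported_citations(r["answer"], r["retrieved"]))
    return {"uncited_rate": uncited / total, "unsupported_citation_rate": unsupported / total}


responses = [
    {"answer": "Submit claims within 30 days [pol-12].", "retrieved": ["pol-12", "pol-13"]},
    {"answer": "The Pro tier includes offline sync [feat-99].", "retrieved": ["feat-01"]},
    {"answer": "I could not find that in the knowledge base.", "retrieved": []},
]
print(attribution_metrics(responses))
```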

The Right Question to Ask Before Your Next AI Investment

The enterprise AI landscape in 2026 is defined by a growing gap between organisations that are successfully moving AI from pilot to production and those that are stuck in an expensive loop of demos that don't scale. The differentiator, consistently and across industries, is not which LLM they chose or how sophisticated their retrieval architecture is.

It's whether they knew — quantifiably, dimensionally, actionably — how ready their data was before they started building.

The organisations that answer "yes" to that question are the ones reporting 50% reductions in document processing costs, 40% faster deployment timelines, and 95% automation accuracy in production. The organisations that skip it are the ones writing post-mortems about why their AI pilot failed to scale.

Before your next RAG investment, before your next AI architecture decision, ask one question: what is our DRI score? If you don't know the answer, that's where to start.

Know Your Data's AI Score Before You Build Anything

DeepRoot's DRI audit profiles your enterprise data across 7 readiness dimensions — no data movement, results in 14 days, full prioritised roadmap included.

Used by enterprise teams in Healthcare · Finance · Education · Manufacturing

Enterprise RAG & Data Readiness: Common Questions

Why do enterprise RAG implementations fail?

Enterprise RAG implementations fail primarily due to poor data quality and lack of data readiness before deployment. Research shows 95% of AI pilots underperform because the underlying data is incomplete, inconsistently structured, lacks proper metadata, or isn't semantically aligned with the queries the RAG system needs to answer. Problems that would be visible in an upfront audit — duplicate documents, stripped permissions metadata, schema mismatches, stale data — surface instead as production failures after months of build investment.

What is a Data Readiness Index (DRI) for AI?

A Data Readiness Index (DRI) is a scored assessment that quantifies how prepared your enterprise data is for GenAI and RAG use cases. DeepRoot's DRI scores data across seven dimensions — quality, understandability, structural integrity, value metrics, fairness, governance, and AI fitness — producing a numeric readiness score per data source, per department, and across your entire enterprise. The output includes diagnostic insights and a prioritised action roadmap, telling you exactly what to fix, and in what order, before deploying AI.

How long does a data readiness audit for RAG take?

With DeepRoot's automated DRI assessment, a comprehensive enterprise data readiness audit takes 2–4 weeks. The process uses metadata-based ingestion — it profiles your data systems without moving any actual data — and produces a scored readiness report within 14 days. Manual audits conducted by internal teams typically take 6–12 weeks and produce qualitative assessments rather than quantified, actionable scores.

What data quality issues affect RAG performance most?

The five most damaging data quality issues for RAG performance are: (1) inconsistent document versioning, where multiple versions of the same document in the knowledge base cause arbitrary retrieval conflicts; (2) missing or inconsistent metadata, which prevents accurate, recency-aware retrieval of chunks; (3) access control metadata stripped during vector store ingestion, creating security and compliance gaps; (4) schema mismatches across source systems that corrupt cross-system reasoning; and (5) semantic misalignment between internal document language and the natural language queries your users actually ask.

Can I deploy RAG without fixing all data quality issues first?

Yes, and this is often the right strategy. A DRI audit doesn't mean you have to fix everything before building anything. It tells you which data sources are RAG-ready now versus which require remediation first. The recommended approach is to deploy your first use case on your highest-scoring data — demonstrating production value quickly — then use that success to fund systematic remediation and expansion into lower-scoring data sources. Trying to fix all data issues before deploying anything is a common path to never deploying at all.

What does a data readiness audit cost compared to mid-project discovery?

According to Deloitte's 2026 analysis, organisations that skip upfront infrastructure audits routinely overspend the rest of their AI implementation by 40–60%. For a medium-to-large enterprise RAG deployment, discovering data problems mid-implementation costs $100,000–$380,000 in remediation work and adds 3–6 months to timelines. An upfront DRI audit — which takes 2 weeks and requires no data movement — typically costs a fraction of that and prevents the majority of those downstream costs.