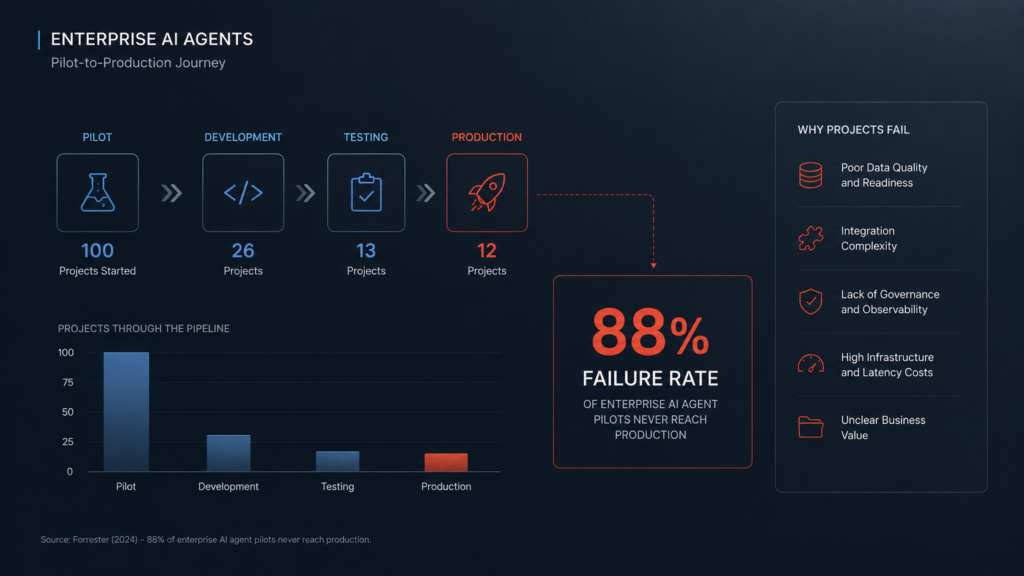

Why 88% of Enterprise AI Agents Never Reach Production — And What the 12% Do Differently

Gartner predicts over 40% of agentic AI projects will be canceled by 2027. The root cause is not the model. Here is what is really breaking enterprise AI, and the technical data governance framework that fixes it.

The Pilot-to-Production Chasm: Why Good Demos Don't Scale

Your agentic AI pilot impressed every stakeholder in the room. The demo was flawless. Six months later, it is still sitting in a staging environment, burning cloud budget and going nowhere. The CTO is asking why the ROI hasn't materialized, and engineering teams are scrambling to explain why the system keeps breaking.

You are not alone in this cycle. It is, statistically, the norm. According to recent IDC research, 88% of AI agent proofs of concept (POCs) never graduate to production deployment. Deloitte’s latest technology trends report points the same way, reporting an 89% pilot-to-production failure rate across enterprise environments. Furthermore, Gartner predicts that over 40% of agentic AI projects will be canceled entirely by the end of 2027 due to escalating costs, unclear business value, and inadequate risk controls.

"Every enterprise has an AI demo that impressed the board. Almost none have AI running in production. The model works on someone's laptop. That is where it stays."

Enterprise Platform Engineering Consensus

The uncomfortable truth is that engineering teams have been chasing the wrong problem. Leadership conversations focus heavily on which foundation model to choose: GPT-4, Gemini 1.5, Claude 3.5, or Llama 3. But the LLM is rarely the point of failure. The failure point is everything surrounding it: the missing production architecture, legacy system bottlenecks, and unstructured data.

The Reality of Demo Environments vs. Messy Infrastructure

The gap between a working pilot and a production system is wider for agentic AI than for any previous technology wave. A pilot typically runs on clean, curated data within a bounded sandbox. It is tightly scoped.

Production means messy, unstructured data, highly concurrent user requests, edge cases your team never anticipated, and strict regulatory compliance. When you are orchestrating autonomous workflows, a simple pilot masks fundamental issues: latency under load, broken API handoffs, and the dangerous reality of deploying "black box" agents without structural governance.

The MIT NANDA Initiative found that 95% of generative AI pilots fail to produce measurable financial impact. The gap between "deployed" and "delivering ROI" is where enterprise value leaks, driven entirely by organizational friction and lack of infrastructure.

Here is why this matters more for autonomous agents than standard chatbots. When an LLM interface produces a hallucination, a human reads it, ignores it, and moves on. But when an AI agent makes a flawed decision based on poorly governed data, it executes an API call. It updates an ERP database. It routes a financial transaction. Without a robust orchestration layer tracking decision provenance, a single silent error propagates through downstream systems instantly.
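To make "tracking decision provenance" concrete, here is a minimal Python sketch of one way an orchestration layer might record an agent's action before the side effect runs. It is an illustration under stated assumptions, not DeepRoot's implementation: `ProvenanceLog`, `ProvenanceRecord`, and every field name are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Callable


@dataclass
class ProvenanceRecord:
    """One agent decision (hypothetical record shape, for illustration only)."""
    agent_id: str
    action: str
    inputs: dict[str, Any]
    data_sources: list[str]
    timestamp: str
    input_hash: str
    status: str = "pending"


@dataclass
class ProvenanceLog:
    records: list[ProvenanceRecord] = field(default_factory=list)

    def execute(
        self,
        agent_id: str,
        action: str,
        inputs: dict[str, Any],
        data_sources: list[str],
        side_effect: Callable[..., Any],
    ) -> Any:
        # Hash the canonical JSON form of the inputs so identical inputs
        # can be matched across records later.
        payload = json.dumps(inputs, sort_keys=True, default=str)
        record = ProvenanceRecord(
            agent_id=agent_id,
            action=action,
            inputs=inputs,
            data_sources=list(data_sources),
            timestamp=datetime.now(timezone.utc).isoformat(),
            input_hash=hashlib.sha256(payload.encode("utf-8")).hexdigest(),
        )
        # The record is appended before the side effect runs, so a crash
        # mid-call still leaves a "pending" or "failed" trace.
        self.records.append(record)
        try:
            result = side_effect(**inputs)
        except Exception:
            record.status = "failed"
            raise
        record.status = "succeeded"
        return result
```

Writing the record first is the design choice that matters here: when a downstream system shows a bad value, the log can answer which agent wrote it, from which data sources, and with which inputs.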

DeepRoot: The Technical Architecture for Production Agents

DeepRoot by Innoflexion is an enterprise AI infrastructure platform designed specifically to bridge the technical gap between local prototyping and secure, scalable production environments.

Before a single vector embedding is created, DeepRoot’s Data Readiness Index (DRI) connects to your existing data lakes and warehouses via read-only connections. It runs deterministic algorithms to evaluate schema stability, data staleness, and missing value thresholds.
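As a rough illustration of what deterministic readiness checks of this kind can look like, the sketch below scores a batch of rows on the three dimensions named above. It is not DeepRoot's algorithm: the function `readiness_report`, its parameters, and the default thresholds (one day, 5% missing) are assumptions chosen for the example.

```python
from datetime import datetime, timedelta, timezone
from typing import Any


def readiness_report(
    rows: list[dict[str, Any]],
    expected_columns: list[str],
    updated_at_column: str,
    now: datetime,
    max_age: timedelta = timedelta(days=1),
    max_missing_ratio: float = 0.05,
) -> dict[str, Any]:
    """Run rule-based checks only; no model is involved."""
    # Schema stability: compare observed column names with the expected set.
    observed: set[str] = set()
    for row in rows:
        observed.update(row)
    expected = set(expected_columns)
    missing_columns = sorted(expected - observed)
    unexpected_columns = sorted(observed - expected)

    # Staleness: the newest timestamp must fall within max_age of `now`.
    timestamps = [
        row[updated_at_column]
        for row in rows
        if row.get(updated_at_column) is not None
    ]
    newest = max(timestamps) if timestamps else None
    is_stale = newest is None or (now - newest) > max_age

    # Missing values: share of rows where a column is absent or None.
    missing_ratio = {
        column: (sum(1 for row in rows if row.get(column) is None) / len(rows))
        if rows
        else 1.0
        for column in expected_columns
    }
    over_threshold = sorted(
        column for column, ratio in missing_ratio.items() if ratio > max_missing_ratio
    )

    return {
        "missing_columns": missing_columns,
        "unexpected_columns": unexpected_columns,
        "is_stale": is_stale,
        "missing_ratio": missing_ratio,
        "over_threshold": over_threshold,
        "ready": not (missing_columns or unexpected_columns or is_stale or over_threshold),
    }
```

Because every check is a plain comparison against a threshold, the same input always yields the same report, which makes the result easy to audit and to gate a pipeline on.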

D.A.V.E. (DeepRoot AI Virtual Expert) replaces fragile hardcoded SQL queries with an intelligent semantic layer. It maps natural language intent directly to structured database architectures, proactively flagging schema drift before it breaks downstream agent tasks.
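Flagging schema drift before it breaks an agent task can be pictured as comparing a stored schema snapshot with the live one, then checking which tasks depend on the changed columns. The sketch below assumes schemas are simple column-to-type mappings; `detect_drift`, `affected_tasks`, and the task names are hypothetical and do not describe how D.A.V.E. works internally.

```python
def detect_drift(baseline: dict[str, str], current: dict[str, str]) -> dict[str, list[str]]:
    """Compare two {column: type} snapshots of the same table."""
    shared = set(baseline) & set(current)
    return {
        "removed": sorted(set(baseline) - set(current)),
        "added": sorted(set(current) - set(baseline)),
        "retyped": sorted(c for c in shared if baseline[c] != current[c]),
    }


def affected_tasks(
    drift: dict[str, list[str]],
    task_dependencies: dict[str, list[str]],
) -> list[str]:
    """Return tasks that read a removed or retyped column.

    Added columns are treated as non-breaking in this sketch.
    """
    breaking = set(drift["removed"]) | set(drift["retyped"])
    return sorted(
        task
        for task, columns in task_dependencies.items()
        if breaking & set(columns)
    )


# Example: one column was renamed and another changed type upstream.
baseline = {"order_id": "INTEGER", "amount": "DECIMAL", "region": "TEXT"}
current = {"order_id": "INTEGER", "amount": "TEXT", "sales_region": "TEXT"}

drift = detect_drift(baseline, current)
at_risk = affected_tasks(
    drift,
    {
        "refund_agent": ["order_id", "amount"],
        "routing_agent": ["region"],
        "audit_agent": ["order_id"],
    },
)
```

Running the comparison on a schedule, or as a gate before each agent run, surfaces the drift as a named list of at-risk tasks rather than as a failed query in production.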

DeepRoot also includes a comprehensive command center to build, deploy, and monitor autonomous agents. It gives engineering teams the tools to construct complex workflows while delivering real-time visibility into the exact enterprise data sources each agent accesses.

Unmonitored autonomous agents create severe shadow-IT risks. The Agent Registry provides a centralized control plane for all deployed agent pods, tracking state transitions, input/output payloads, and tool-use permissions.
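A centralized control plane of this kind can be sketched as a registry that owns each agent's state machine and tool permissions. The Python below is a simplified illustration, not the Agent Registry's actual design: the state names, the transition table, and the `AgentRegistry` API are assumptions, and payload logging is left out for brevity.

```python
from dataclasses import dataclass, field
from enum import Enum


class AgentState(Enum):
    REGISTERED = "registered"
    RUNNING = "running"
    PAUSED = "paused"
    RETIRED = "retired"


# Hypothetical lifecycle: which state changes the registry will accept.
ALLOWED_TRANSITIONS = {
    AgentState.REGISTERED: {AgentState.RUNNING, AgentState.RETIRED},
    AgentState.RUNNING: {AgentState.PAUSED, AgentState.RETIRED},
    AgentState.PAUSED: {AgentState.RUNNING, AgentState.RETIRED},
    AgentState.RETIRED: set(),
}


@dataclass
class AgentEntry:
    agent_id: str
    allowed_tools: frozenset[str]
    state: AgentState = AgentState.REGISTERED
    history: list[tuple[AgentState, AgentState]] = field(default_factory=list)


class AgentRegistry:
    def __init__(self) -> None:
        self._agents: dict[str, AgentEntry] = {}

    def register(self, agent_id: str, allowed_tools: set[str]) -> AgentEntry:
        if agent_id in self._agents:
            raise ValueError(f"agent {agent_id!r} is already registered")
        entry = AgentEntry(agent_id, frozenset(allowed_tools))
        self._agents[agent_id] = entry
        return entry

    def transition(self, agent_id: str, new_state: AgentState) -> None:
        entry = self._agents[agent_id]
        if new_state not in ALLOWED_TRANSITIONS[entry.state]:
            raise ValueError(
                f"illegal transition {entry.state.value} -> {new_state.value}"
            )
        # Keep every accepted transition so the lifecycle can be audited.
        entry.history.append((entry.state, new_state))
        entry.state = new_state

    def authorize(self, agent_id: str, tool: str) -> bool:
        # Unknown agents, non-running agents, and unlisted tools are all denied.
        entry = self._agents.get(agent_id)
        return (
            entry is not None
            and entry.state is AgentState.RUNNING
            and tool in entry.allowed_tools
        )
```

The deny-by-default `authorize` check is what addresses the shadow-IT concern: an agent that was never registered, or that has been paused, cannot call any tool through the registry.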

Your proprietary IP and customer data are your core differentiators. DeepRoot’s Walled Garden ensures that sensitive telemetry and vector embeddings never leave your network boundaries.

The Engineering Imperative for 2026

The window for competitive advantage in enterprise AI is narrowing. Organizations that treat agentic AI as just another SaaS implementation will fail. Success requires treating AI agents as modular microservices that demand rigorous CI/CD pipelines, strict telemetry, and an ironclad data foundation.

DeepRoot exists precisely for this moment: not as another conversational UI, but as the underlying technical infrastructure that determines whether your autonomous agents scale securely or fail in the sandbox.

Frequently Asked Questions

Why do most enterprise AI pilots fail to reach production?

What is a Data Readiness Index (DRI)?

What is DeepRoot and how does it help enterprises deploy AI agents?

Ready to Cross the Production Gap?

Stop letting bad data and blind execution stall your AI initiatives. DeepRoot AI provides the exact infrastructure, governance, and visibility needed to move your autonomous agents into secure, scalable production.